October 7, 2010

Sentiment analysis is the hot new buzz phrase in the world of internet marketing metrics. As social media becomes more important to marketers, it naturally follows that we want to measure whether people are talking favorably or unfavorably about our brands. And once the volume of social media conversations outgrows what simple manual tracking can handle, we look for automated tools.

The problem, though, is that the current state of the art in social media sentiment analysis is not advanced enough to provide reliably meaningful information. Manual analysis is often still required to ensure accurate results. At a minimum, automated statistics need to be spot-checked periodically to determine how valid they are.

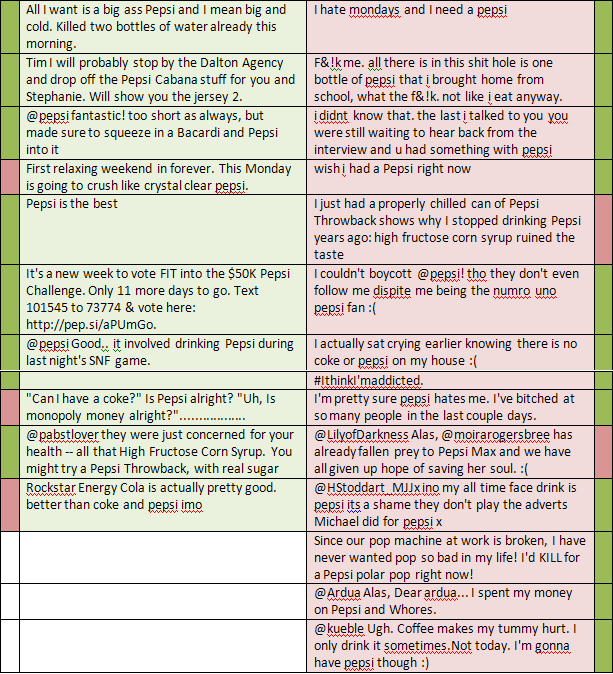

As an example, let’s look at a recent analysis of tweets about a major brand by one of the sentiment analysis engines on the market. (I won’t name the engine, so as not to single it out unfairly.) The chart below shows the first 23 tweets the engine analyzed for “Pepsi”. Tweets on the left (shaded green) were classified as positive, and those on the right (shaded red) as negative. Next to each tweet is an extra block showing our manual classification of the same tweet: green for positive, red for negative. (Although some tweets could reasonably be considered neutral, we kept things simple: just positive or negative.)

The result? Of the first 23 tweets returned by the engine, 14 (about 61%) were coded incorrectly! The automated analysis translates to a slightly negative overall sentiment (.43), when the manual analysis actually showed a strongly positive overall sentiment (.78).
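To make the arithmetic behind these figures concrete, here is a minimal Python sketch. It assumes (the article doesn’t say) that the sentiment score is simply the share of tweets labeled positive. Under that assumption, the only split of the 23 tweets consistent with 14 disagreements and scores of .43 and .78 is: 7 tweets both sides called positive, 3 only the engine called positive, 11 only the manual review called positive, and 2 both called negative. Those counts are our reconstruction, not data read from the chart, and the class and field names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Agreement:
    """Hypothetical tally of engine labels vs. manual labels (positive/negative only)."""
    both_positive: int      # engine positive, manual positive
    engine_only_pos: int    # engine positive, manual negative
    manual_only_pos: int    # engine negative, manual positive
    both_negative: int      # engine negative, manual negative

    @property
    def total(self) -> int:
        return (self.both_positive + self.engine_only_pos
                + self.manual_only_pos + self.both_negative)

    @property
    def disagreements(self) -> int:
        # A tweet is miscoded when the engine and the manual review disagree.
        return self.engine_only_pos + self.manual_only_pos

    def error_rate(self) -> float:
        return self.disagreements / self.total

    def engine_score(self) -> float:
        # Assumed score: share of tweets the engine labeled positive.
        return (self.both_positive + self.engine_only_pos) / self.total

    def manual_score(self) -> float:
        # Same assumed score, using the manual labels.
        return (self.both_positive + self.manual_only_pos) / self.total


# Counts reconstructed from the article's totals under the assumption above.
pepsi = Agreement(both_positive=7, engine_only_pos=3,
                  manual_only_pos=11, both_negative=2)
print(f"misclassified: {pepsi.disagreements}/{pepsi.total} ({pepsi.error_rate():.0%})")
print(f"engine score: {pepsi.engine_score():.2f}")
print(f"manual score: {pepsi.manual_score():.2f}")
```

Running it reports 14 of 23 tweets (61%) miscoded, an engine score of 0.43 and a manual score of 0.78. If the engine uses a different scoring scale, only the two score methods would need to change.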

Also note that for our analysis we used a brand name (“Pepsi”) that automated systems can identify relatively easily. Had we chosen another common brand such as “Coke”, our problems would have been compounded further: on top of the same incorrect sentiment classifications, we would have faced two more problems.

- The system will incorrectly include tweets that use the same word without referring to the brand. For example, many tweets about “coke” refer to the drug. (And of course we have to take into account all of the tweets by those in the coal and steel industries referring to “coke”, the fuel produced by processing bituminous coal. They’re always skewing our numbers!)

- The system also fails (unless a second pass is run) to include tweets that use other forms of the brand name, in this case the more formal “Coca Cola”. (Pepsi is lucky in that its shortened and full names both contain the word “Pepsi”, which makes internet searches and analyses easier.)
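Both problems can be partly addressed by expanding the search to known aliases and filtering out tweets whose context suggests another meaning. The Python sketch below shows one naive way to do this with whole-word keyword matching. The alias and exclusion lists are hypothetical examples, not a vetted vocabulary, and a keyword filter like this will still misfire (for instance, dropping a genuine brand tweet that happens to mention a steel mill).

```python
import re

# Hypothetical vocabulary for illustration only; a real filter would be tuned
# against a sample of actual tweets.
BRAND_ALIASES = ["coke", "coca cola", "coca-cola", "cocacola"]
# Context words suggesting "coke" means the drug or the coal product.
EXCLUDE_CONTEXT = ["cocaine", "snort", "coal", "steel", "furnace", "bituminous"]


def word_pattern(terms):
    """Compile a case-insensitive regex matching any term as a whole word or phrase."""
    alternatives = "|".join(re.escape(term) for term in terms)
    return re.compile(rf"\b(?:{alternatives})\b", re.IGNORECASE)


ALIAS_RE = word_pattern(BRAND_ALIASES)
EXCLUDE_RE = word_pattern(EXCLUDE_CONTEXT)


def mentions_brand(text):
    """True if the text names the brand and contains none of the exclusion words."""
    return bool(ALIAS_RE.search(text)) and not EXCLUDE_RE.search(text)


tweets = [
    "Nothing beats an ice-cold Coke on a hot day",
    "Grabbed a Coca Cola with lunch",
    "The plant turns bituminous coal into coke",
    "Pepsi all the way",
]
for tweet in tweets:
    print(mentions_brand(tweet), tweet)
```

The first two tweets are kept (the second only because “coca cola” is in the alias list), the third is dropped by the exclusion list, and the fourth never matches. Even with such a filter, the sentiment of the surviving tweets still needs checking.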

Does this mean that automated sentiment analysis should be avoided? Not necessarily, but it does mean that its results need to be regularly spot-checked by actual people until the tools become more consistently reliable.
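One way to make spot-checking routine is to have a person review a random sample of the engine’s output and report the agreement rate with a margin of error. The Python sketch below draws a reproducible random sample and computes a Wilson score interval for the agreement rate. The function names and placeholder data are hypothetical, and the Wilson interval is our choice of method, not something the sentiment engines necessarily provide.

```python
import math
import random


def draw_spot_check(items, sample_size, seed=None):
    """Pick a random subset of engine-scored items for human review."""
    rng = random.Random(seed)  # seeded so the same sample can be re-drawn
    return rng.sample(items, min(sample_size, len(items)))


def wilson_interval(agreements, n, z=1.96):
    """Approximate 95% Wilson score interval for the engine/human agreement rate."""
    if n == 0:
        raise ValueError("need at least one reviewed item")
    p = agreements / n
    denom = 1 + z**2 / n
    centre = (p + z**2 / (2 * n)) / denom
    half = z * math.sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return centre - half, centre + half


# Placeholder items standing in for tweets the engine has already scored.
scored = [f"tweet {i}" for i in range(500)]
to_review = draw_spot_check(scored, 25, seed=42)
print(f"reviewing {len(to_review)} tweets")

# Pepsi example: 23 tweets reviewed, 23 - 14 = 9 coded correctly.
low, high = wilson_interval(agreements=9, n=23)
print(f"agreement: {low:.0%} to {high:.0%}")
```

For the Pepsi sample (9 of 23 tweets coded correctly), the interval runs from roughly 22% to 59%: wide, but enough to show the engine was far from reliable for this brand. Reviewing larger samples narrows the interval.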

Domus is a leading-edge internet marketing agency that brings its full range of classic marketing expertise to its high-tech digital capabilities. For more information on how Domus can help you accurately analyze your internet presence and develop effective strategies to further your brand, visit us at http://www.domusinc.com.